Delivering huge amounts of data to many locations at the same time is a challenge faced by global industries such as entertainment and media, government and military, retail and financial services, education, and many more. Although internet connectivity speeds have increased significantly, there are several scenarios in which other distribution methods work much better.

Multicasting — Quick + Secure Transmit

One such method is multicasting, or the transmission from a single source to multiple points, usually widely dispersed. Multicasting is exceptionally effective when...

» Wired connectivity is either unavailable or undependable, such as when trying to send to airplanes, cars, rural locations lacking “last mile” connections, soldiers in the field, and so on.

» A particular Quality of Service (QoS) level is required that can only be achieved through private networks, as with large corporations that have locations across many states or countries, military applications, etc.

» The amount of data to be sent is so massive that terrestrial delivery (e.g., CDN) becomes cost prohibitive, such as the delivery of feature films, often 200 GB or more, to thousands of locations, as well as the prepositioning of content in homes.

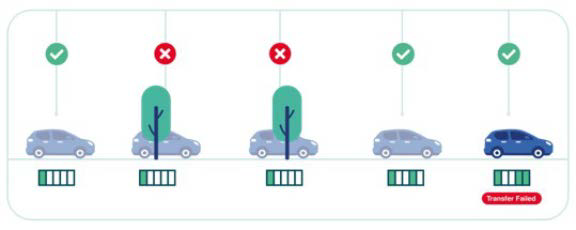

Figure 1. Single transmission: A file is transmitted once in five segments of 20% each. A car entering into a tree canopy, for example, prevents the distribution of the second and third segments and causes a failed delivery


In these scenarios, multicast-enabled networks such as satellite, TV distribution, and even cellular networks are superior options for quickly and securely transmitting files and live video streams.

Are Those Rain Clouds?

Despite their unparalleled ability to simultaneously reach a nearly unlimited number of geographically dispersed sites, these wireless digital distribution channels are still prone to errors and data does not always reach every recipient perfectly. Three aspects of data delivery can impact success:

Degradation — Similar to tires wearing down from use, data gets corrupted during delivery.

Delay — Similar to a traffic jam, congestion on the path may keep data from arriving on time and in order.

Deletion — Like any journey, if the bridge is out, or if the path has never been mapped, the data is lost and never arrives.

When interruptions occur, they create gaps in which crucial data bits can slip through and be lost, resulting in incomplete or failed transmissions. Mobile receivers will experience gaps in line-of-sight visibility as they pass through tunnels, overpasses, or behind obstacles.

Satellite signals are vulnerable to noise, whether rain clouds dispersing radio waves or white noise caused by thermal radiation from the sun. Multicasting data from one to thousands of receivers using a broadcast network (such as satellite, television, or 5G) comes with frequently interrupted downlinks and requires application awareness. That’s because receivers scattered across the globe, at times moving at high speeds, experience different receiving conditions and timeframes. Such disruptions can last from milliseconds to minutes.

Missing or corrupt packets can make the file or stream (a feature film, educational content, critical military intelligence, banking data, live sports and music concerts) unavailable. This lack of reliability is a critical concern for Hollywood movie studios, military and government agencies, major TV and news networks, and retailers, all of whom need to be sure their crucial content arrives fully intact every time. In a military context, interruptions can be truly life-threatening.

Traditional Multicast Delivery Methods

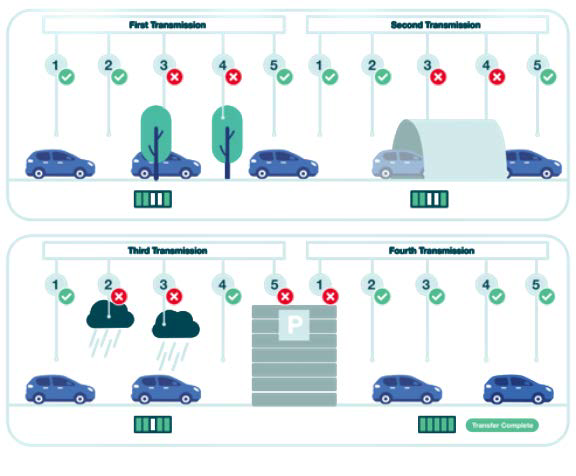

Figure 2. Carousel transmission: Again, the file is sent in five segments of 20% each. The file is re-sent continuously to prevent loss from blockages but this is unreliable and inefficient, requiring multiple


Traditionally, files were transmitted once between distributor and recipient, with both parties crossing their fingers that the entire file was received, uninterrupted and complete. This was particularly true with military operations, where no back channel is available to report missing data (a back link would risk revealing the position of military installations and troops). In such cases, the sender would transmit a file multiple times, “spraying and praying,” in the hope that, over the course of several interrupted transmissions, an entire file could be constructed.

This “carousel delivery” has a higher probability of successful file distribution than sending a single time, but it is unreliable and inefficient, in that it will likely require multiple transmissions with correspondingly higher delivery costs. This is especially true for transmissions to multiple recipients who require that the same data be sent multiple times to ensure successful distribution. More transmissions are required to ensure that 100% of recipients receive 100% of the file. The inefficiency increases and costs rise.
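To make the cost of carouseling concrete, here is a rough back-of-the-envelope sketch. It assumes, purely for illustration, that each segment is received with a fixed probability on every pass and that losses are independent across segments and receivers. Real blockages (tunnels, rain fade) are bursty, so the numbers are indicative only. The function names and the 99% target are hypothetical choices, not taken from any product.

```python
def prob_all_complete(p_receive, passes, segments, receivers):
    """Chance that every receiver holds every segment after `passes` carousel cycles."""
    # A segment is still missing only if it was lost on every single pass.
    p_segment_held = 1.0 - (1.0 - p_receive) ** passes
    # Assumption: losses are independent across segments and receivers.
    return p_segment_held ** (segments * receivers)


def passes_needed(p_receive, segments, receivers, target=0.99):
    """Smallest number of carousel cycles that reaches the target probability."""
    if not 0.0 < p_receive <= 1.0:
        raise ValueError("p_receive must be in (0, 1]")
    passes = 1
    while prob_all_complete(p_receive, passes, segments, receivers) < target:
        passes += 1
    return passes


if __name__ == "__main__":
    # Five segments, each received 80% of the time on any given pass.
    for receivers in (1, 10, 1000):
        print(receivers, "receivers ->", passes_needed(0.8, 5, receivers), "passes")
```

Under these assumptions a single receiver needs 4 full passes to reach 99% confidence, while 1,000 receivers need 9. The whole file is re-sent each time, which is the rising cost described above.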

What was needed was a way of ensuring error-free transmission. The solution involved the use of channel coding so that errors can be corrected within the first distribution, rather than sending the file multiple times and hoping that all parts eventually make it through. This is called Forward Error Correction.

What The FEC?

Error correction is not a new technology. The American mathematician Richard Hamming pioneered this field in the 1940s and invented the first error-correcting code in 1950 while trying to fix problems with error-prone punched card readers. In fact, the military was already using some forms of error correction, but better schemes were required.

Modern Forward Error Correction is an effective digital signal processing technique used to enhance data reliability. It improves the bit error rate of communication links by adding redundant information (parity bits) to the data at the transmitter, which the receiver uses to detect and correct errors that may have been introduced in the transmission link.
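As an illustration of parity bits at work, the sketch below implements the classic Hamming(7,4) code, which adds three parity bits to four data bits and can correct any single flipped bit. It is a textbook example chosen for brevity, not a description of what any particular link or product uses. The function names are illustrative.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits into a 7-bit codeword laid out as p1 p2 d1 p4 d2 d3 d4."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # covers codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers codeword positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct up to one flipped bit and return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The three checks, read as a binary number, give the 1-based position
    # of the flipped bit (0 means every check passed).
    error_position = s1 + 2 * s2 + 4 * s4
    if error_position:
        c[error_position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


if __name__ == "__main__":
    sent = hamming74_encode([1, 0, 1, 1])
    damaged = list(sent)
    damaged[4] ^= 1  # the link flips one bit
    print(hamming74_decode(damaged))
```

The receiver never asks for a resend. The redundancy that travelled with the data is enough to locate and undo the error.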

In the simplest form of FEC, each character is sent twice. The receiver checks both instances of each character for adherence to the protocol being used. If conformity occurs in both instances, the character is accepted. If conformity occurs in one instance and not in the other, the character that conforms to protocol is accepted. If conformity does not occur in either instance, the character is rejected and a blank space or an underscore (_) is displayed in its place.
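The send-it-twice scheme can be sketched in a few lines. The "protocol" check here is assumed to be a single even-parity bit appended to each 7-bit ASCII character. That choice, and all function names, are illustrative assumptions rather than anything specified in the description above.

```python
def add_parity(ch):
    """Pack a 7-bit ASCII character with one even-parity bit (8 bits total)."""
    code = ord(ch) & 0x7F
    parity = bin(code).count("1") % 2
    return (code << 1) | parity


def conforms(word):
    """A word conforms to the assumed protocol if it has an even number of 1 bits."""
    return bin(word).count("1") % 2 == 0


def send_twice(text):
    """Transmit every character as two identical protected copies."""
    return [(add_parity(ch), add_parity(ch)) for ch in text]


def receive(pairs):
    """Accept whichever copy conforms; show '_' if neither does."""
    out = []
    for first, second in pairs:
        if conforms(first):
            out.append(chr(first >> 1))
        elif conforms(second):
            out.append(chr(second >> 1))
        else:
            out.append("_")
    return "".join(out)


if __name__ == "__main__":
    pairs = send_twice("FEC")
    pairs[0] = (pairs[0][0] ^ 0b100, pairs[0][1])          # one copy of 'F' damaged
    pairs[2] = (pairs[2][0] ^ 0b1, pairs[2][1] ^ 0b10000)  # both copies of 'C' damaged
    print(receive(pairs))
```

This doubles the bandwidth used and still fails when both copies are hit, which is why more capable codes are needed.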

In some ways, the mathematical algorithm behind FEC is like a Sudoku puzzle where, if a user receives enough numbers, they can work out what the missing numbers are. If an algorithm can solve for all of the missing pieces, it can complete the entire file or stream. With FEC, missing pieces are reconstructed using supplementary packets that were generated prior to transmission and broadcast with the original file or stream.
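The Sudoku idea can be seen in its simplest form with a single XOR parity packet: one supplementary packet is computed before transmission, and any one lost data packet can be solved for from the rest. This is a minimal sketch assuming equal-length packets and exactly one loss. Production FEC schemes tolerate many losses, and the function names here are illustrative.

```python
from functools import reduce


def xor_bytes(a, b):
    """Byte-wise XOR of two equal-length packets."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(packets):
    """Supplementary packet: the XOR of all data packets."""
    return reduce(xor_bytes, packets)


def recover_missing(received, parity):
    """Fill in the single packet marked None using the parity packet."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) != 1:
        raise ValueError("this sketch repairs exactly one lost packet")
    present = [p for p in received if p is not None]
    # XOR-ing the parity with every surviving packet leaves only the lost one.
    rebuilt = reduce(xor_bytes, present, parity)
    repaired = list(received)
    repaired[missing[0]] = rebuilt
    return repaired


if __name__ == "__main__":
    packets = [b"AAAA", b"BBBB", b"CCCC", b"DDDD"]
    parity = make_parity(packets)
    arrived = [b"AAAA", b"BBBB", None, b"DDDD"]  # third packet lost in transit
    print(recover_missing(arrived, parity))
```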

What does this mean? It means that if you are a leading distributor of films to cinemas and you want to stop sending content out on bulky hard drives and start sending with satellite multicasting, for example, you need to be sure that files are received perfectly the first time they are sent. FEC is the solution, as it ensures the perfect delivery of files the first time they are sent, despite errors that may have occurred during transmission. FEC plugs and repairs these gaps. However, not all FEC operates in the same way and not all FEC technologies are equal.

Applying The Highest Level

To understand the differences between types of FEC, we need to understand a little about how the internet protocol suite, commonly known as TCP/IP, defines the communications protocols used to send data. TCP/IP specifies how data should be packetized, addressed, transmitted, routed and received.

This functionality is organized into four levels or layers:

» The Application level (provides protocols that allow software to send and receive information, such as via web browsers and email clients)

» The Transport level (where large files are broken into smaller groups for sending)

» The Internet level (providing addressing for connections between independent networks)

» The Link level (providing a physical connection between devices).

Regular FEC methods (think Reed-Solomon, Viterbi, and even LDPC) work at the Link level (LL), restricting them to checking for and correcting errors in data transferred between network entities at this same level. Such FEC is unaware of the wider application and is restricted to a local area network boundary. It can only correct errors in data transferred within this boundary, such as audio and video packages, over tiny timeframes.

Accordingly, when downlinks are interrupted, data bits are lost, and LL-FEC cannot request missing parts to repair a file. The result is a broken package that needs resending and retransmitting to every receiver, creating delays and unnecessary costs.

Advanced FEC operates at the Application Level (AL). This is where file sizes are largest, the time of transmission is longest and the need for error correction is greatest. It is also where cross-boundary and more advanced behaviors occur. As a result, AL-FEC schemes can check for errors, repair data, and request retransmissions across different levels.

AL-FEC is aware and unrestricted, checking for errors and repairing data across different levels. This removes the need for retransmissions. Accordingly, AL-FEC is the highest level FEC and ensures the greatest efficiency, reliability, operational effectiveness and cost savings.

Many customers do not know they are using the lesser form, believing all FEC to be the same. You would be smart not to make that error. Please check that the FEC your organization is using is AL-FEC if you are multicasting content.

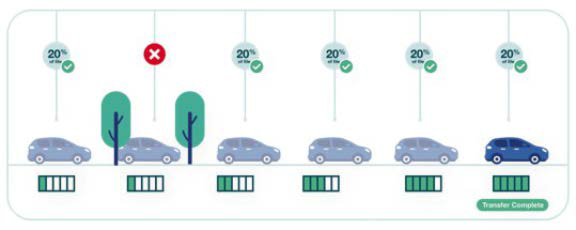

Figure 3. Be smart with Smart FEC: The file is again broken into five segments. However, all packets sent are unique FEC packets. It does not matter which segment of a given file is received. As long as five segments are received, the file can be successfully recreated.


Get Smart

FEC, LL-FEC and AL-FEC work by solving for holes in a file or stream with supplemental data packets generated prior to transmission. The latest innovation in this field transmits only supplemental packets: there is no original file data. This smart innovation increases bandwidth efficiency when multicasting content to fixed sites (removing the need for carouseling content), and it is invaluable when sending to receivers moving in and out of coverage, such as groups of moving vehicles (for example, airplanes traversing spot beams). Imagine a movie file that is broken into 100 pieces and transmitted to cars within range of the transmitter. Blocked by tunnels, tree canopies, rain, etc., cars moving into and out of reception will receive some pieces (1-9, 17-35, 54-71, etc.) and not others, and each car will almost certainly receive different pieces from the others (5-15, 22-33, 40-65, etc.).

If the transmission is sent multiple times (carousel transmission), there is the possibility that, over time, the recipients will get enough data for even LL-FEC to generate a complete file. However, Smart FEC does not need all the original file pieces, as it can use supplemental FEC data to cover for those lost in distribution.

With Smart FEC, it would typically be enough to receive any 102 packets of data to be able to complete the file. To give you an idea of what that means, a theatre chain or an airline’s in-flight entertainment system receiving a 200 GB feature film will get a complete delivery of the latest blockbuster, even if it missed 1 hour of a 12-hour transmission.
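The "any sufficiently large set of packets will do" property can be demonstrated with a small Reed-Solomon-style erasure code. The sketch below is not the Smart FEC algorithm, whose internals are not described here. It is a textbook construction, assumed for illustration, that treats the k data bytes as points on a polynomial over the prime field GF(257) and transmits only other points on that polynomial, so no original data is sent. All names are illustrative, and `pow(x, -1, P)` requires Python 3.8 or later.

```python
P = 257  # smallest prime above 255, so every byte value is a field element


def lagrange_eval(points, x):
    """Value at x of the unique polynomial (degree < len(points)) through points, mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, n_packets):
    """Return n_packets FEC packets (x, y); none of them is a raw data byte position."""
    k = len(data)
    if k + n_packets > P:
        raise ValueError("too many packets for this small field")
    source = list(enumerate(data))  # the data bytes sit at x = 0 .. k-1
    return [(x, lagrange_eval(source, x)) for x in range(k, k + n_packets)]


def decode(packets, k):
    """Rebuild the k original bytes from any k distinct received packets."""
    if len(packets) < k:
        raise ValueError("not enough packets received")
    points = packets[:k]
    return bytes(lagrange_eval(points, x) for x in range(k))


if __name__ == "__main__":
    data = b"HELLO"
    sent = encode(data, 12)
    # A receiver that caught only packets 1, 4, 6, 9 and 11 still gets the file.
    caught = [sent[i] for i in (1, 4, 6, 9, 11)]
    print(decode(caught, len(data)))
```

This idealised code needs exactly k packets. The small extra margin quoted above (102 packets for a 100-piece file) is not modelled here.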

It is also vitally important for streaming live events. Smart FEC delivers a perfect recreation of the original content, but without introducing any delay. Any live event or stream can be multicast in real-time, without a single error, and accessible only by its intended recipients.

With the demands for mobility, digitization, improved terrestrial throughput and real-time streaming applications only set to increase, content distributors need to look closely at their current distribution methods and ensure they have access to the best and most complete broadband multicast/unicast solutions on the market.

Be Smart.

Dr. Henrik Axelsson is the President of KenCast. Since joining KenCast in 2006, Henrik has held multiple roles within the company, including software development, operations, business development and management. As President, Henrik oversees the company’s technology roadmaps, its daily operations as well as its marketing, business development and sales initiatives. In concert with the Board of Directors, Henrik charts the company’s strategic direction.

Henrik received his Ph.D. and M.S. in Electrical Engineering from Georgia Institute of Technology with a concentration in optimization and control theory. He holds an M.S. in Electrical Engineering from Chalmers University of Technology, Sweden.